
5/24/2023 Covariance matrix

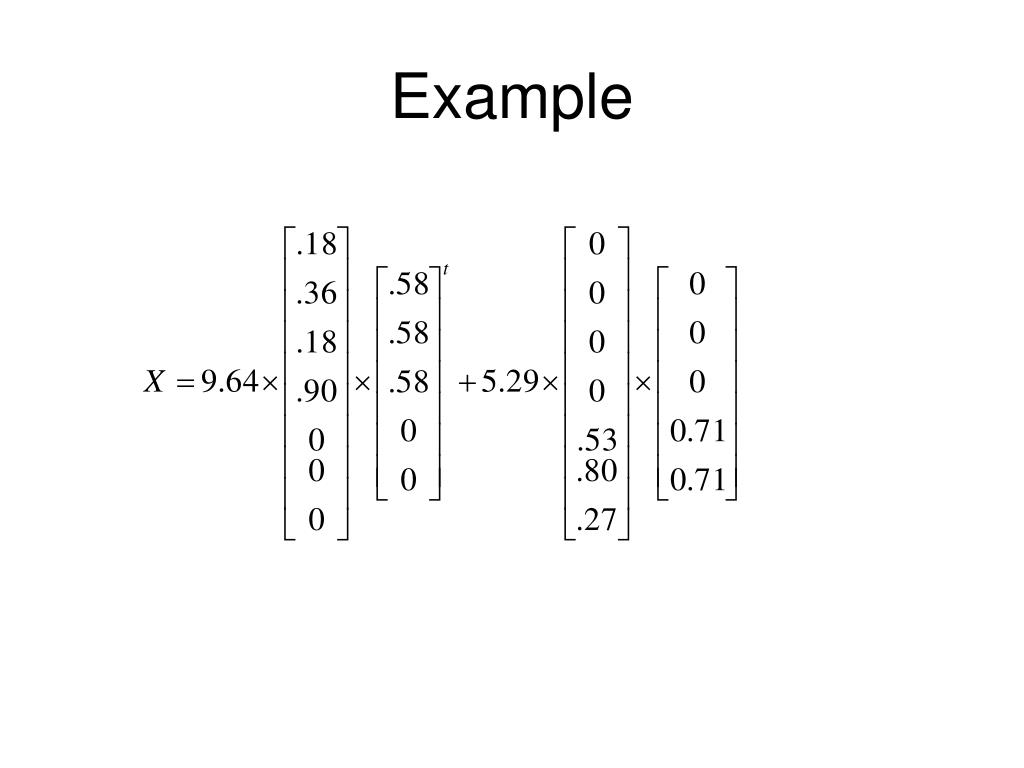

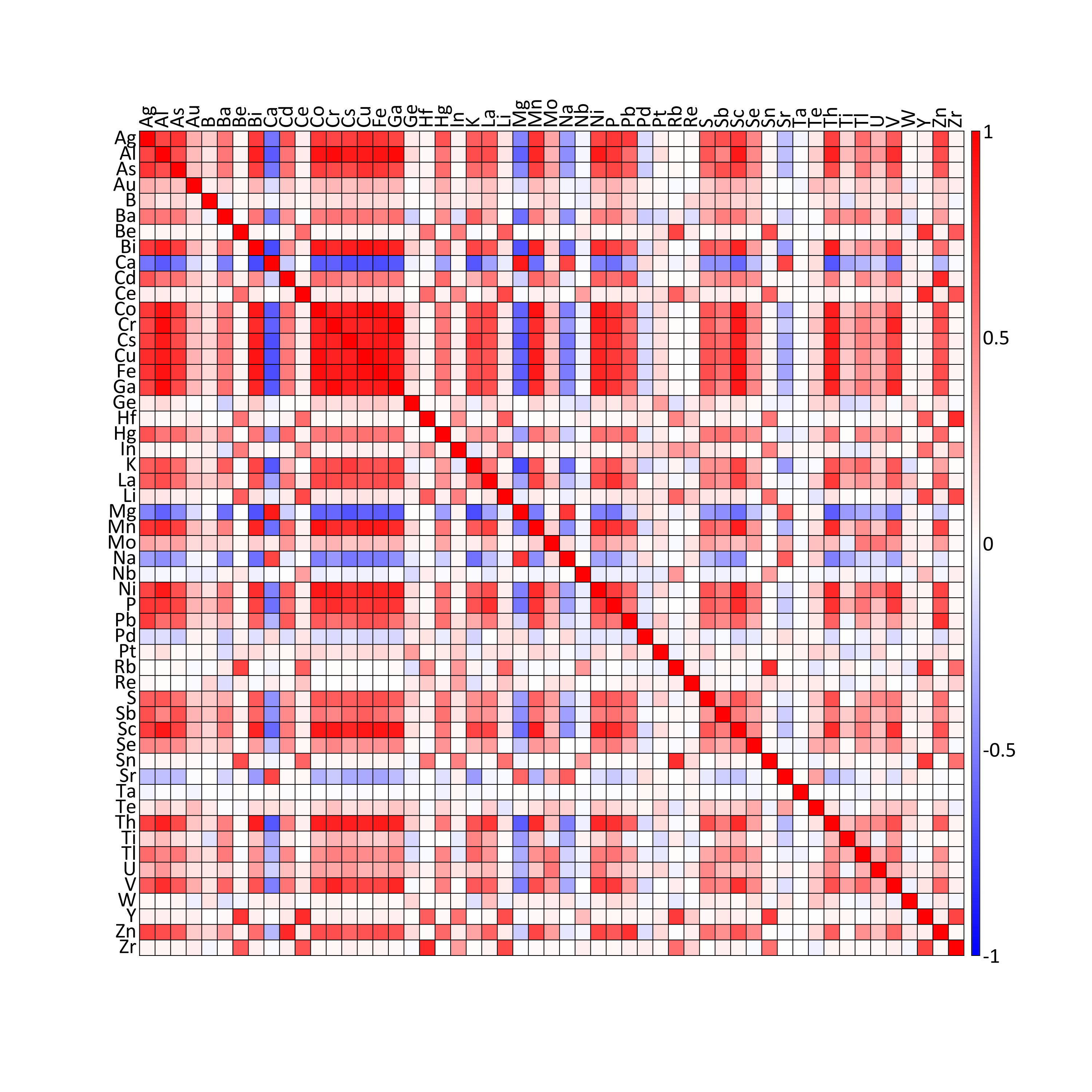

Basic linear algebra, introductory statistics and some familiarity with core machine learning concepts (such as PCA and linear models) are the prerequisites of this post. Otherwise it will probably make no sense. An abridged version of this text is also posted on Quora.

Most textbooks on statistics cover covariance right in their first chapters. It is defined as a useful "measure of dependency" between two random variables:

$$\mathrm{cov}(X, Y) = E\big[(X - E[X])(Y - E[Y])\big].$$

The textbook would usually provide some intuition on why it is defined as it is, prove a couple of properties, such as bilinearity, define the covariance matrix for multiple variables as $\Sigma_{ij} = \mathrm{cov}(X_i, X_j)$, and stop there. Later on the covariance matrix would pop up here and there in seemingly random ways. In one place you would have to take its inverse, in another compute the eigenvectors, or multiply a vector by it, or do something else for no apparent reason apart from "that's the solution we came up with by solving an optimization task".

In reality, though, there are some very good and quite intuitive reasons for why the covariance matrix appears in various techniques in one or another way. This post aims to show that, illustrating some curious corners of linear algebra in the process.

The best way to truly understand the covariance matrix is to forget the textbook definitions completely and depart from a different point instead. Namely, from the definition of the multivariate Gaussian distribution:

We say that the vector $x$ has a normal (or Gaussian) distribution with mean $\mu$ and covariance $\Sigma$ if:

$$\Pr(x) = |2\pi\Sigma|^{-1/2} \exp\left(-\tfrac{1}{2}(x - \mu)^T \Sigma^{-1} (x - \mu)\right).$$

To simplify the math a bit, we will limit ourselves to the centered distribution (i.e. $\mu = 0$) and refrain from writing out the normalizing constant. Now, the definition of the (centered) multivariate Gaussian looks as follows:

$$\Pr(x) \propto \exp\left(-\tfrac{1}{2}\, x^T \Sigma^{-1} x\right).$$

Much simpler, isn't it? Finally, let us define the covariance matrix as nothing else but the parameter $\Sigma$ of the Gaussian distribution. You will see where it will lead us in a moment.

Transforming the Symmetric Gaussian

Consider a symmetric Gaussian distribution, i.e. the one with $\Sigma = I$ (the identity matrix). Let us take a sample from it, which will of course be a symmetric, round cloud of points. We know from above that the likelihood of each point $z$ in this sample is

$$P(z) \propto \exp\left(-\tfrac{1}{2}\, z^T z\right). \tag{1}$$

Now let us apply a linear transformation $A$ to the points, i.e. let $x = Az$. Suppose that, for the sake of this example, $A$ scales the vertical axis by 0.5 and then rotates everything by 30 degrees. We will get a new, elongated and tilted cloud of points $x$.

What is the distribution of $x$? Just substitute $z = A^{-1}x$ into (1), to get:

$$P(x) \propto \exp\left(-\tfrac{1}{2}\, (A^{-1}x)^T (A^{-1}x)\right) = \exp\left(-\tfrac{1}{2}\, x^T (AA^T)^{-1} x\right). \tag{2}$$

This is exactly the Gaussian distribution with covariance $\Sigma = AA^T$. The logic works both ways: if we have a Gaussian distribution with covariance $\Sigma$, we can regard it as a distribution which was obtained by transforming the symmetric Gaussian by some $A$, and we are given $AA^T$.

More generally, if we have any data, then, when we compute its covariance to be $\Sigma$, we can say that if our data were Gaussian, then it could have been obtained from a symmetric cloud using some transformation $A$, and we just estimated the matrix $AA^T$ corresponding to this transformation.

Note that we do not know the actual $A$, and it is mathematically totally fair. There can be many different transformations of the symmetric Gaussian which result in the same distribution shape. For example, if $A$ is just a rotation by some angle, the transformation does not affect the shape of the distribution at all. Correspondingly, $AA^T = I$ for all rotation matrices. When we see a unit covariance matrix we really do not know whether it is the "originally symmetric" distribution or a "rotated symmetric distribution". And we should not really care: those two are identical.

There is a theorem in linear algebra which says that any symmetric matrix $\Sigma$ can be represented as:

$$\Sigma = V D V^T, \tag{3}$$

where $V$ is orthogonal (a rotation, possibly combined with a reflection) and $D$ is diagonal (a coordinate-wise scaling). For a covariance matrix the diagonal entries of $D$ are non-negative, so (3) can be rewritten as $\Sigma = (VD^{1/2})(VD^{1/2})^T = AA^T$ with $A = VD^{1/2}$. This, in simple words, means that any covariance matrix $\Sigma$ could have been the result of transforming the data using a coordinate-wise scaling $D^{1/2}$ followed by a rotation $V$.

Given the above intuition, PCA already becomes a very obvious technique. Let us assume (or "pretend") that our data came from a normal distribution, and let us ask which scaling and rotation of a symmetric cloud could have produced it.
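The transformation argument of this post can be checked numerically. The sketch below is only an illustration, not code from the original post. It uses plain Python with no third-party libraries, and every function and variable name in it is made up for this example. It samples a symmetric Gaussian cloud, applies the example transformation (scale the vertical axis by 0.5, then rotate by 30 degrees), and compares the estimated covariance with $AA^T$. It then uses the closed-form eigendecomposition of a symmetric 2x2 matrix to recover a rotation and a scaling that could have produced the data.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(m):
    return [list(row) for row in zip(*m)]

def sample_covariance(points):
    """Covariance of 2-D data that is assumed to be centered (mean zero)."""
    n = len(points)
    sxx = sum(x * x for x, _ in points) / n
    sxy = sum(x * y for x, y in points) / n
    syy = sum(y * y for _, y in points) / n
    return [[sxx, sxy], [sxy, syy]]

def symmetric_eig_2x2(s):
    """Closed-form decomposition S = V D V^T of a symmetric 2x2 matrix.

    Returns V (a rotation matrix) and the diagonal of D, largest value first.
    """
    a, b, c = s[0][0], s[0][1], s[1][1]
    theta = 0.5 * math.atan2(2 * b, a - c)  # direction of the largest spread
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    v = [[cos_t, -sin_t], [sin_t, cos_t]]
    mean, radius = (a + c) / 2, math.hypot((a - c) / 2, b)
    return v, [mean + radius, mean - radius]

random.seed(0)  # fixed seed so that the run is repeatable

# A = rotation by 30 degrees applied after scaling the vertical axis by 0.5.
angle = math.radians(30)
rotation = [[math.cos(angle), -math.sin(angle)],
            [math.sin(angle), math.cos(angle)]]
scaling = [[1.0, 0.0], [0.0, 0.5]]
A = matmul(rotation, scaling)
expected = matmul(A, transpose(A))  # the covariance predicted by the argument

# Symmetric cloud z, then the transformed cloud x = A z.
z = [(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(100_000)]
x = [(A[0][0] * u + A[0][1] * v, A[1][0] * u + A[1][1] * v) for u, v in z]

estimated = sample_covariance(x)
V, d = symmetric_eig_2x2(estimated)
recovered_angle = math.degrees(math.atan2(V[1][0], V[0][0]))
recovered_scales = [math.sqrt(value) for value in d]

print("A A^T               :", expected)
print("estimated covariance:", estimated)
print("recovered angle     :", recovered_angle)
print("recovered scales    :", recovered_scales)
```

The recovered rotation and scaling are only one of many transformations consistent with the estimated covariance, which matches the remark that the actual transformation cannot be identified from the covariance alone. For data in more than two dimensions, a numerical eigensolver for symmetric matrices would replace the closed-form helper.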
